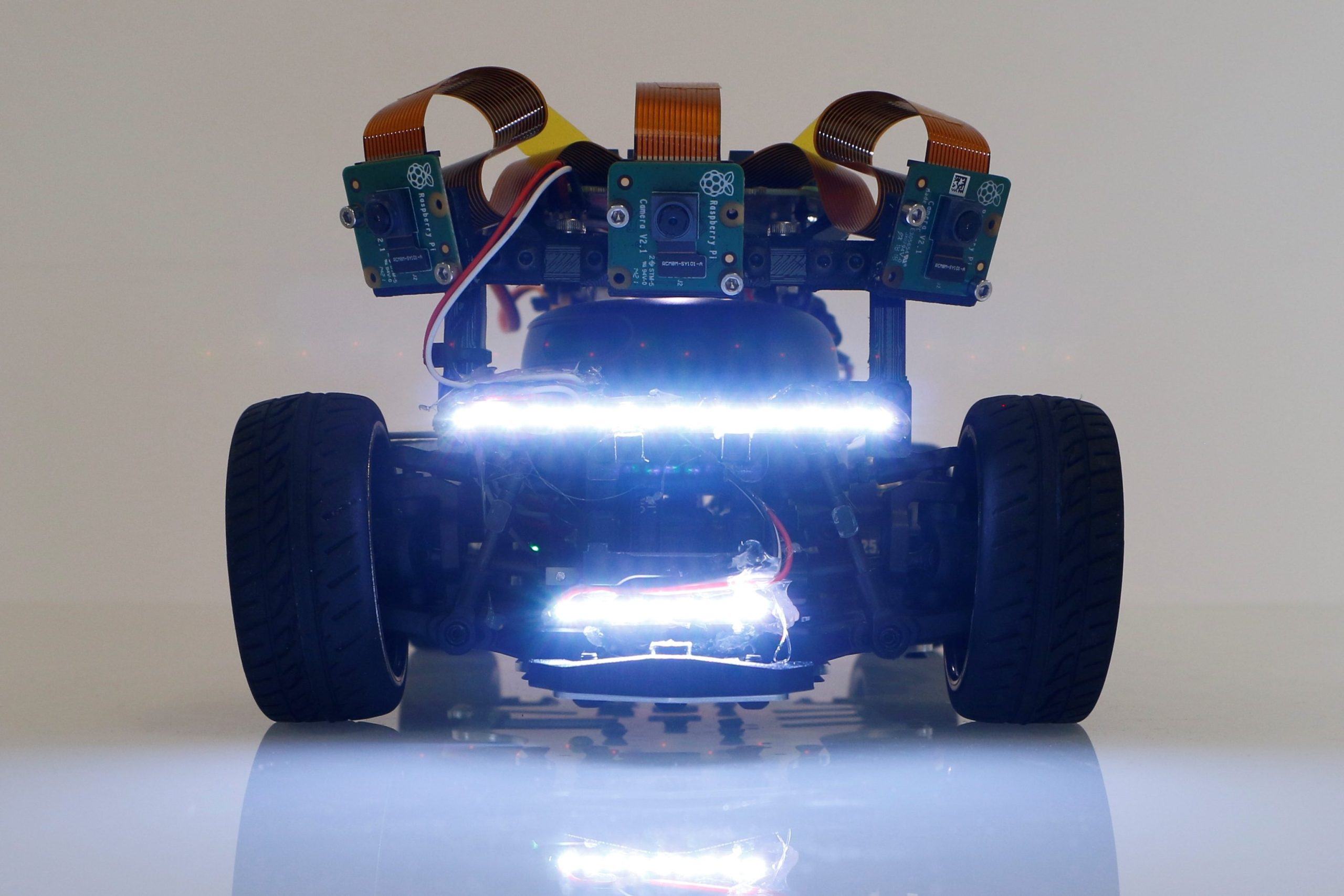

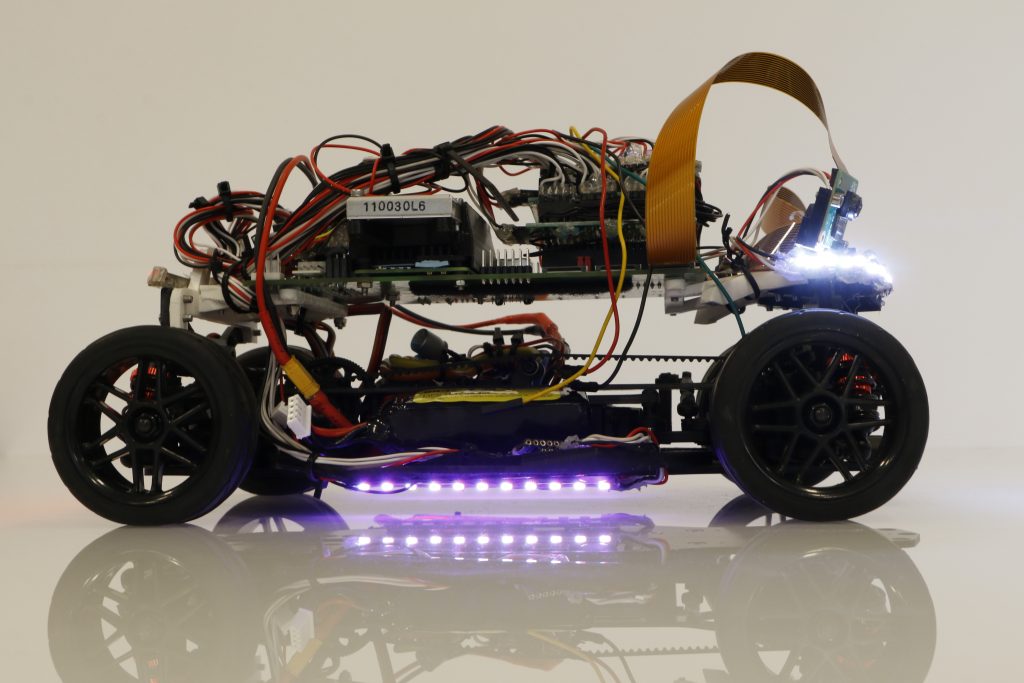

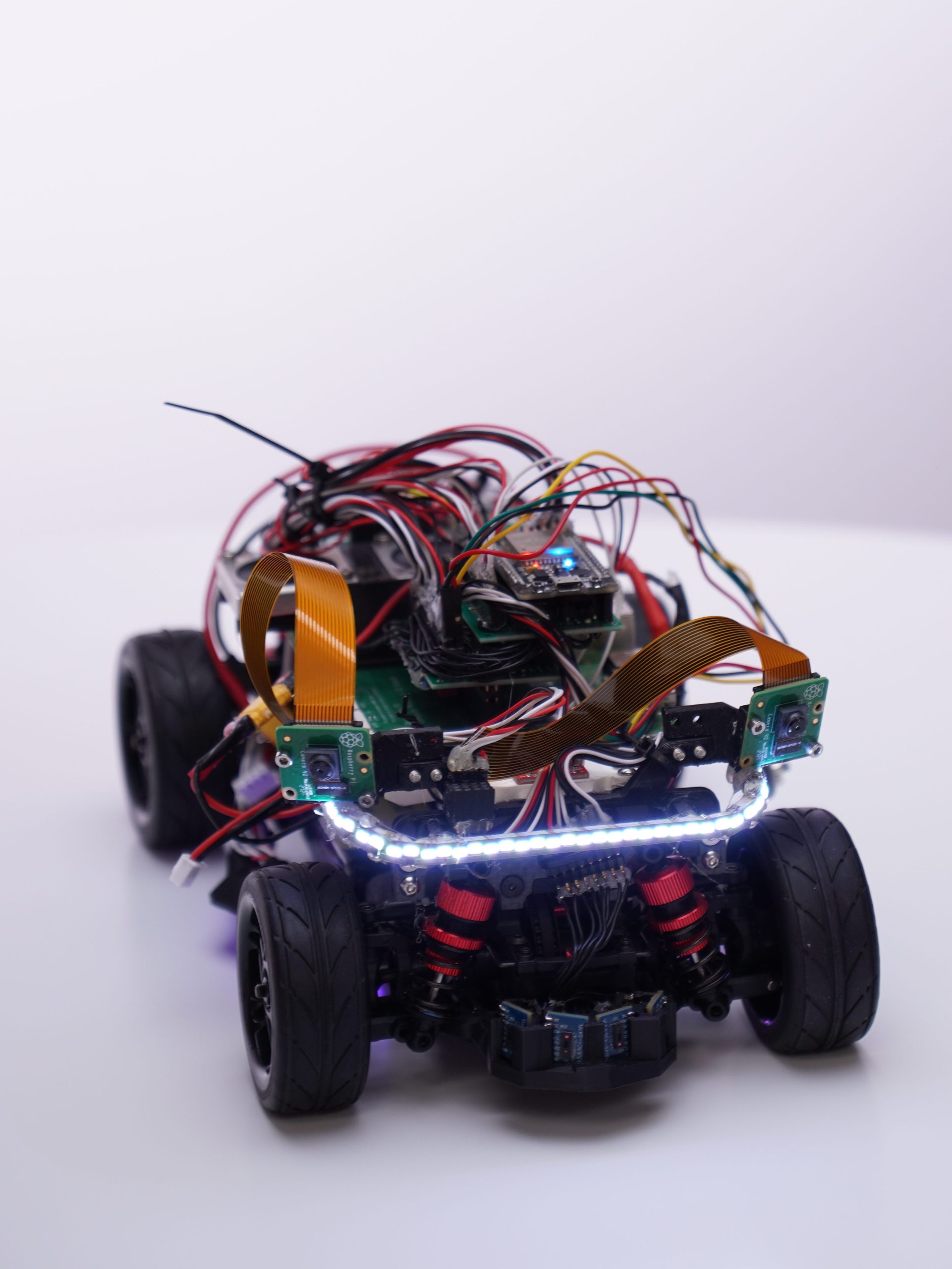

GEN 1

The first generation of our autonomous car

Why we developed GEN 1

After we had decided to participate, the question arose of which robot system we would use to master the challenge of autonomous driving. In the past, we always enjoyed working with the EV3 and Lego technology. However, we ran into limitations, especially when programming in the EV3’s own programming language. For this reason, we decided to take on a new challenge and leave the EV3 world behind. Having turned our backs on the Mindstorms cosmos, we now faced the question of which of the numerous robot systems on the market to choose.

Gen 1

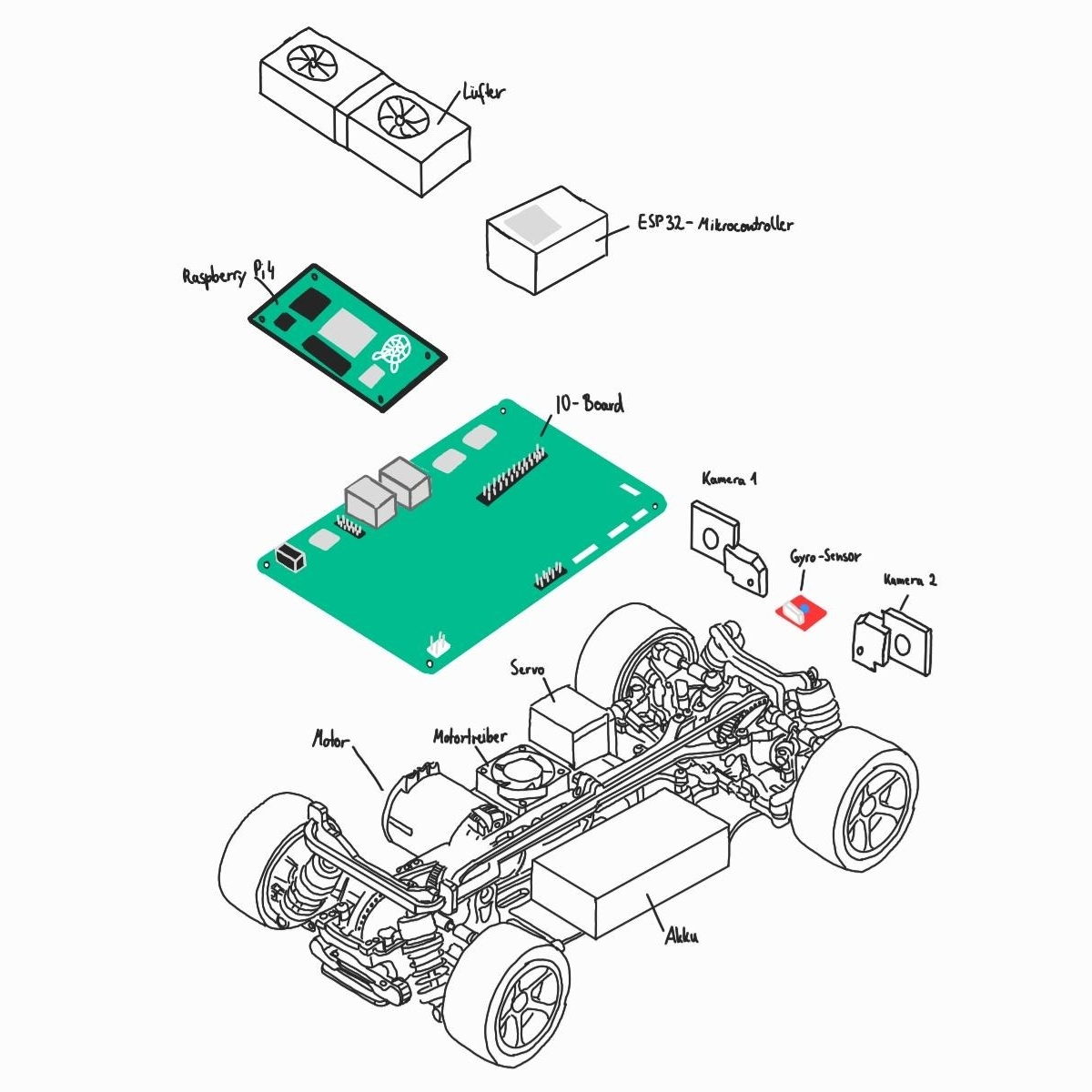

Features

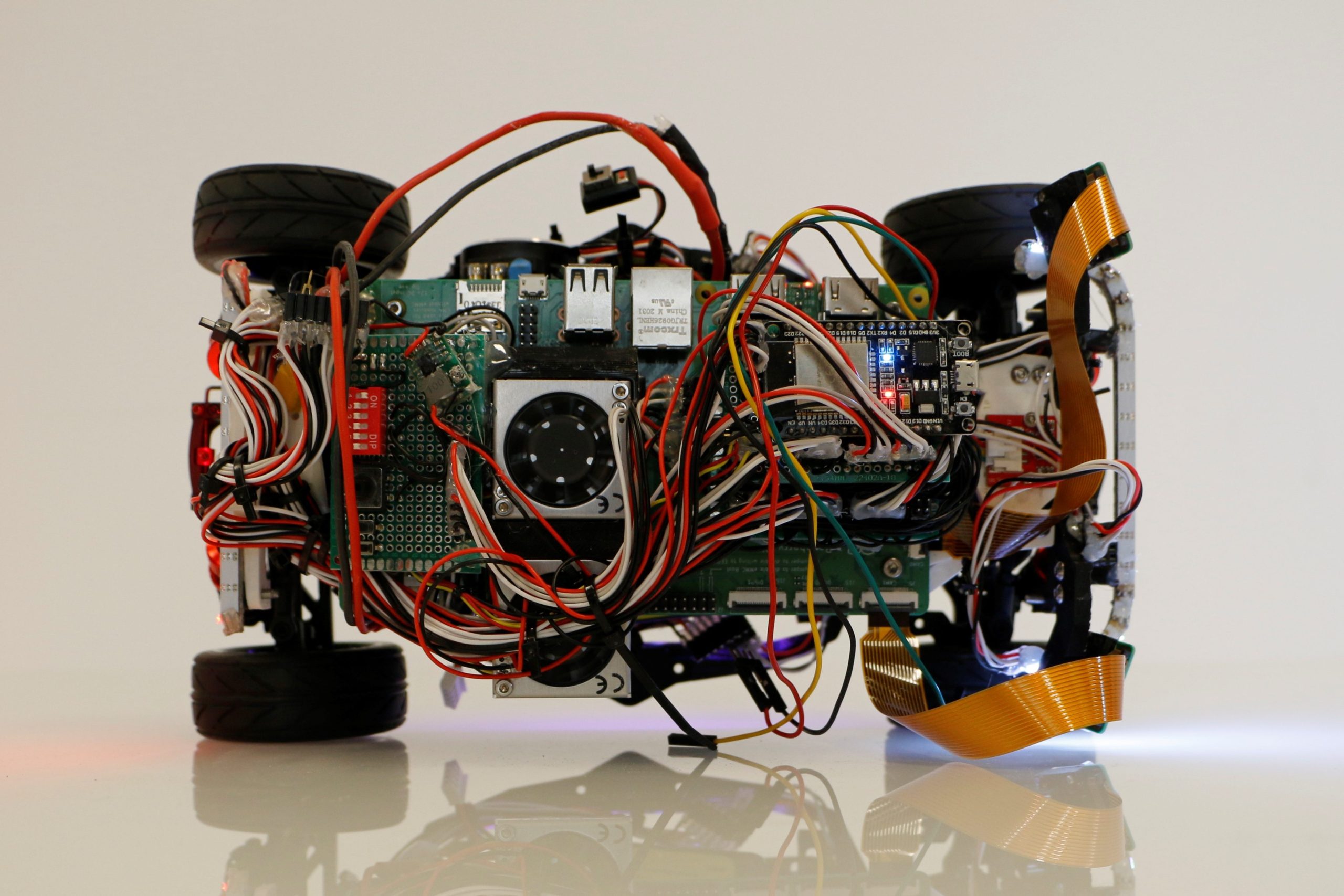

- Raspberry Pi Compute Module 4

- ESP 32

- 2 cameras

- MPU 6050

- AWD

- up to 12 Lidar sensors

At first, we considered designing the robot car from scratch using CAD and additive manufacturing. However, it quickly became clear that this would not have been possible alongside our studies. That is why we chose a remote-controlled car as the chassis of our robot car. As a replacement for the EV3 brick, we now work with a Raspberry Pi CM4. The car also has two Raspberry Pi cameras, which we use for autonomous driving and obstacle detection. To let the Raspberry Pi control the drive motor and the servo motor reliably, we found that an ESP 32 microcontroller was necessary: without it, the PWM (pulse width modulation) signal would not have been constant, which would have caused the servo motor to tremble.
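To illustrate why a steady PWM signal matters for the servo, here is a minimal sketch in Python. It is not our actual firmware. It assumes a typical hobby servo driven at 50 Hz with pulse widths between 1000 and 2000 microseconds and a hypothetical steering range of ±30 degrees; all constants and function names are illustrative assumptions.

```python
# Assumed values for a typical hobby servo; the real servo may differ.
PWM_FREQUENCY_HZ = 50        # one pulse every 20 ms
MIN_PULSE_US = 1000          # assumed pulse width at full left
MAX_PULSE_US = 2000          # assumed pulse width at full right
MAX_STEERING_DEG = 30.0      # hypothetical steering range: -30 to +30 degrees


def steering_to_pulse_us(angle_deg: float) -> float:
    """Map a steering angle in degrees to a pulse width in microseconds."""
    # Clamp the request to the assumed mechanical range.
    angle = max(-MAX_STEERING_DEG, min(MAX_STEERING_DEG, angle_deg))
    # 0.0 means full left, 1.0 means full right.
    fraction = (angle + MAX_STEERING_DEG) / (2 * MAX_STEERING_DEG)
    return MIN_PULSE_US + fraction * (MAX_PULSE_US - MIN_PULSE_US)


def pulse_to_duty_cycle(pulse_us: float) -> float:
    """Convert a pulse width to a duty cycle between 0 and 1."""
    period_us = 1_000_000 / PWM_FREQUENCY_HZ
    return pulse_us / period_us


def jitter_to_angle_error(jitter_us: float) -> float:
    """Steering error in degrees caused by a pulse-width timing error."""
    us_per_degree = (MAX_PULSE_US - MIN_PULSE_US) / (2 * MAX_STEERING_DEG)
    return jitter_us / us_per_degree


if __name__ == "__main__":
    center = steering_to_pulse_us(0.0)
    print(f"center pulse: {center:.0f} us, duty cycle: {pulse_to_duty_cycle(center):.3f}")
    print(f"50 us of jitter moves the steering by about {jitter_to_angle_error(50):.1f} degrees")
```

Under these assumptions, a timing error of only a few tens of microseconds already shifts the steering by several degrees, which is why pulses generated with imprecise timing make the servo tremble, while a microcontroller that generates them in hardware keeps it steady.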

We have documented the process of building the first GEN in a video on our YouTube channel. The video also shows the first laps the car drove.

GitHub

More information about the development of the first GEN, as well as technical details, is available on our GitHub. You can also find parts of our code and other interesting documents there.

Autonomous driving and obstacle detection

Once the car was built, we could move on to the actual tasks of autonomous driving and obstacle detection. Our first idea for orientation on the playing field was to use lidar sensors, which we installed at the front and sides of the car. Lidar sensors are already being installed in motor vehicles for autonomous driving, as they can create an image of the environment. However, because the lidar sensors work with infrared light, we realized that they were not compatible with the black band of the playing field, so we had to discard the idea of lidar sensors.

The alternative we have focused on since then is to use the cameras. We work with two cameras because together they give us a wider field of view. With the help of the cameras, we are able to detect the boards and the obstacles, so the main components of our program are image recognition algorithms.
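As a rough illustration of what such an image recognition step can look like, the following Python sketch finds dark pixels in a grayscale frame and reports on which side of the image the dark boundary dominates. It is a simplified stand-in, not our actual program: the frame is a plain list of brightness rows, and the threshold value and function names are assumptions chosen for the example.

```python
from typing import List

Frame = List[List[int]]  # rows of brightness values from 0 (black) to 255 (white)

DARK_THRESHOLD = 60  # assumed value; would need tuning for real lighting


def dark_pixels_per_column(frame: Frame, threshold: int = DARK_THRESHOLD) -> List[int]:
    """Count, for every image column, how many pixels are darker than the threshold."""
    width = len(frame[0])
    return [sum(1 for row in frame if row[x] < threshold) for x in range(width)]


def boundary_side(frame: Frame, threshold: int = DARK_THRESHOLD) -> str:
    """Return 'left', 'right' or 'balanced' depending on where dark pixels dominate."""
    counts = dark_pixels_per_column(frame, threshold)
    half = len(counts) // 2  # with an odd width, the middle column counts as right
    left, right = sum(counts[:half]), sum(counts[half:])
    if left == right:
        return "balanced"
    return "left" if left > right else "right"


if __name__ == "__main__":
    # Tiny 3x4 example frame: dark pixels are concentrated on the left.
    frame = [
        [20, 30, 200, 210],
        [25, 180, 190, 220],
        [10, 170, 200, 230],
    ]
    print(dark_pixels_per_column(frame))
    print(boundary_side(frame))
```

A steering routine could use such a result to keep away from the side where the dark boundary fills more of the image. A real pipeline would work on full camera frames and would also have to separate the obstacles from the boundary.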

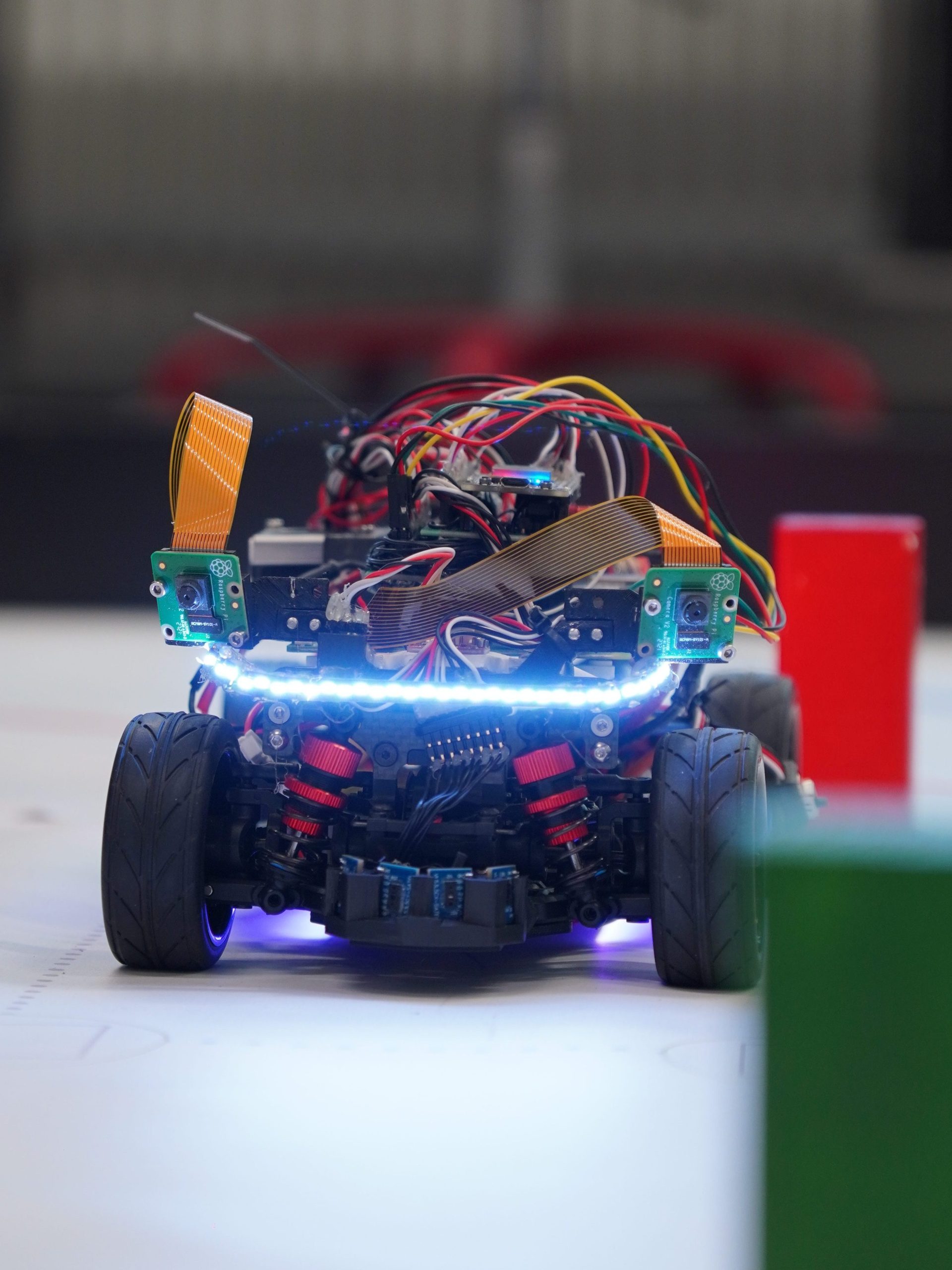

Preliminary round Dortmund

Having taken part in the WRO many times since the 5th grade, we know that the actual competition day is always something very special. Not only because it is a beautiful event in an unfamiliar city, but also because on this day the intensive work of the last weeks and months is put to the test.

We have often gone into a competition with a very good feeling, only to realize that we had been wrong, because the robot suddenly no longer did what it should, e.g. because the local conditions influenced the sensors differently than during preparation. Over the years, we have therefore learned to deal with surprises on the day of the competition. We had a similar experience at our preliminary round in Dortmund in June. At home and on site before the competition runs, everything ran perfectly. But then came the decisive run, in which our car suddenly got stuck on the board after the first corner, which is of course very annoying and frustrating in the moment. But that, too, is part of the WRO.

Nevertheless, we were lucky enough to qualify for the German final in 1st place. We are very much looking forward to this new challenge. In Dortmund we identified the following problems that need to be solved:

- The turning radius was too large, so the car took the corners too wide and could not avoid the obstacles flexibly enough.

- The perimeter recognition, which we implemented by means of image recognition, was error-prone on site because the camera image was overexposed, so the black band could no longer be recognized.
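One way to notice the overexposure problem at runtime is to check how many pixels of a frame are saturated. The Python sketch below shows the idea on a grayscale frame given as a list of brightness rows. It is only an illustration under assumed values: the saturation level of 250 and the limit of 30 % saturated pixels are hypothetical and would have to be tuned on the real cameras.

```python
from typing import List

Frame = List[List[int]]  # rows of brightness values from 0 (black) to 255 (white)


def saturated_fraction(frame: Frame, saturation_level: int = 250) -> float:
    """Return the share of pixels at or above the saturation level."""
    total = sum(len(row) for row in frame)
    if total == 0:
        raise ValueError("frame contains no pixels")
    saturated = sum(1 for row in frame for value in row if value >= saturation_level)
    return saturated / total


def is_overexposed(frame: Frame, max_fraction: float = 0.3,
                   saturation_level: int = 250) -> bool:
    """Flag a frame as overexposed if too many pixels are saturated."""
    return saturated_fraction(frame, saturation_level) > max_fraction


if __name__ == "__main__":
    washed_out = [[255, 255, 120], [255, 40, 251]]  # 4 of 6 pixels saturated
    normal = [[100, 120], [30, 255]]                # 1 of 4 pixels saturated
    print(is_overexposed(washed_out))
    print(is_overexposed(normal))
```

If a frame is flagged, the program could react, for example by lowering the exposure of the camera or by adapting the threshold used to find the black band, instead of silently losing the boundary.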